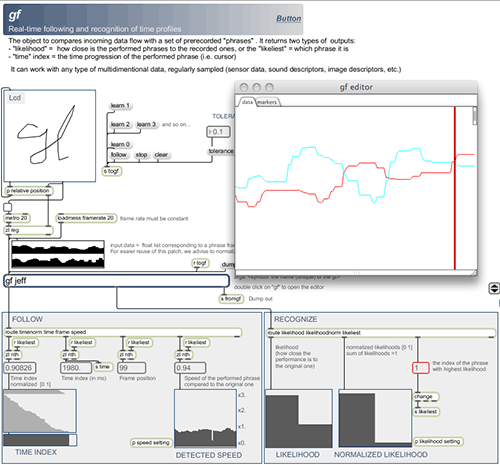

Real-time following and recognition of time profiles

The Gesture Follower allows for real-time comparison of a gesture performed live with a set of prerecorded examples. The implementation can be seen as a hybrid between DTW (Dynamic Time Warping) and HMM (Hidden Markov Models).

In most standard gesture recognition systems, gestures are considered as units that must be recognised once completed. Therefore, these systems output results at discrete time events, typically at the end of each gesture.

The Gesture Follower corresponds to a different interaction paradigm, motivated by applications in expressive visual and sound control: the system “continuously” (i.e. on a fine temporal grain) outputs parameters characterising the performed gesture.

More precisely, two types of information are continuously updated. These are probabilistic estimates of

1) the similarity of the performed gesture to the prerecorded gestures (likelihood) and

2) the time progression of the performed gesture.

The first type of information allows for the selection of the likeliest gesture at any moment, and the second allows for the estimation of the current temporal index inside the gesture, referred to here as “gesture following”.

These continuous output data are especially well suited for both selecting and synchronizing various continuous visual or sound processes to gestures.
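
The two continuously updated outputs described above can be illustrated with a minimal sketch. It is not the code of the gf external: it assumes a left-to-right HMM with one state per sample of a one-dimensional template, a Gaussian observation model, and a forward update run on every incoming sample. The class name `TemplateFollower`, the noise value `sigma`, and the transition probabilities are hypothetical choices made for this illustration only.

```python
import math


class TemplateFollower:
    """Illustrative left-to-right HMM with one state per template sample."""

    def __init__(self, template, sigma=0.2, transitions=(0.25, 0.5, 0.25)):
        if len(template) < 2:
            raise ValueError("template needs at least two samples")
        self.template = list(template)   # prerecorded 1-D gesture samples
        self.sigma = sigma               # assumed observation noise (std dev)
        self.transitions = transitions   # assumed P(stay), P(advance 1), P(advance 2)
        self.alpha = None                # normalised forward probabilities
        self.log_likelihood = 0.0        # accumulated log-likelihood of the live input
        self.progress = 0.0              # estimated position in the template, 0..1

    def _emission(self, state, x):
        # Gaussian density of the live sample around the template sample.
        d = (x - self.template[state]) / self.sigma
        return math.exp(-0.5 * d * d) / (self.sigma * math.sqrt(2.0 * math.pi))

    def step(self, x):
        """Consume one live sample; return (progress, log_likelihood)."""
        n = len(self.template)
        if self.alpha is None:
            # Assumption: every gesture starts at the beginning of its template.
            predicted = [1.0] + [0.0] * (n - 1)
        else:
            # Propagate probability mass forward only (left-to-right topology).
            predicted = [0.0] * n
            for i, a in enumerate(self.alpha):
                for jump, p in enumerate(self.transitions):
                    predicted[min(i + jump, n - 1)] += a * p
        weighted = [predicted[i] * self._emission(i, x) for i in range(n)]
        total = sum(weighted)
        if total > 0.0:
            self.alpha = [w / total for w in weighted]
            self.log_likelihood += math.log(total)
        else:
            # Numerical underflow: keep the prediction and apply a large penalty.
            self.alpha = predicted
            self.log_likelihood += math.log(1e-300)
        # Time progression: expected state index, scaled to the range 0..1.
        mean_state = sum(i * a for i, a in enumerate(self.alpha))
        self.progress = mean_state / (n - 1)
        return self.progress, self.log_likelihood


# Two toy "prerecorded gestures" and a live input that repeats the first one.
rising = [i / 10 for i in range(11)]
falling = rising[::-1]
followers = {"rising": TemplateFollower(rising),
             "falling": TemplateFollower(falling)}

for x in rising:
    for follower in followers.values():
        follower.step(x)

# Recognition: pick the template with the highest likelihood so far.
likeliest = max(followers, key=lambda name: followers[name].log_likelihood)
```

In this sketch both quantities are available after every sample, so the likeliest template can be re-selected at any moment while `progress` gives the current temporal index inside it.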

The Gesture Follower is a system for real-time following and recognition of time profiles. In this example, the Gesture Follower learns three gestures, i.e. drawings made with the mouse, while we simultaneously record voice data.

During the “performance”, the Gesture Follower recognizes which gesture is being performed, and plays the corresponding sound, time stretched or compressed depending on the pacing of the gesture.
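
One way to picture the time stretching or compression is as a playback rate derived from how fast the estimated time progression advances. The helper below is a hypothetical illustration, not the mechanism used in the example patch: it assumes the progression is reported as a value between 0 and 1 and that the sound associated with the gesture has a known duration in seconds.

```python
def playback_rate(prev_progress, progress, dt, sound_duration):
    """Return the playback speed implied by the gesture's pacing.

    1.0 means original speed, values above 1.0 compress the sound in time,
    values below 1.0 stretch it. All parameter names are illustrative.
    """
    if dt <= 0:
        raise ValueError("dt must be positive")
    # Seconds of recorded sound covered by the gesture during dt seconds.
    return (progress - prev_progress) * sound_duration / dt


# The gesture advanced by 5% of its length in 0.1 s; the sound lasts 4 s.
rate = playback_rate(0.0, 0.05, 0.1, 4.0)
```

Here the gesture is performed twice as fast as it was recorded, so the sound would be played at about twice its original speed.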

The Gesture Follower is used to select and synchronize prerecorded videos, following the dancer's gestures. It uses data from inertial sensors worn on the dancer's wrists.

The gf external is now part of the “MuBu for Max” distribution, which you can access directly here:

http://forumnet.ircam.fr/shop/en/forumnet/59-mubu-pour-max.html

Please post any comments, bug reports, or feature requests on forumnet.ircam.fr:

http://forumnet.ircam.fr/user-groups/mubu-for-max/